Hybrid AI Assistant – Evolution of today’s Agents

I wrote this article myself and then asked AI to improve my English.

This is my personal view as a solution architect who has been building cloud software and data platforms for 20 years. It’s more of a thought on how I see Actor in 1-2 years.

As AI assistants become more capable, the underlying architecture becomes just as important as the models themselves. Currently, agents are evaluated mostly on the models, frameworks, and prompts they use.

For systems like Actor, designed to operate across emails, calendars, documents, and business workflows, a purely cloud-based or purely local approach quickly reaches its limits.

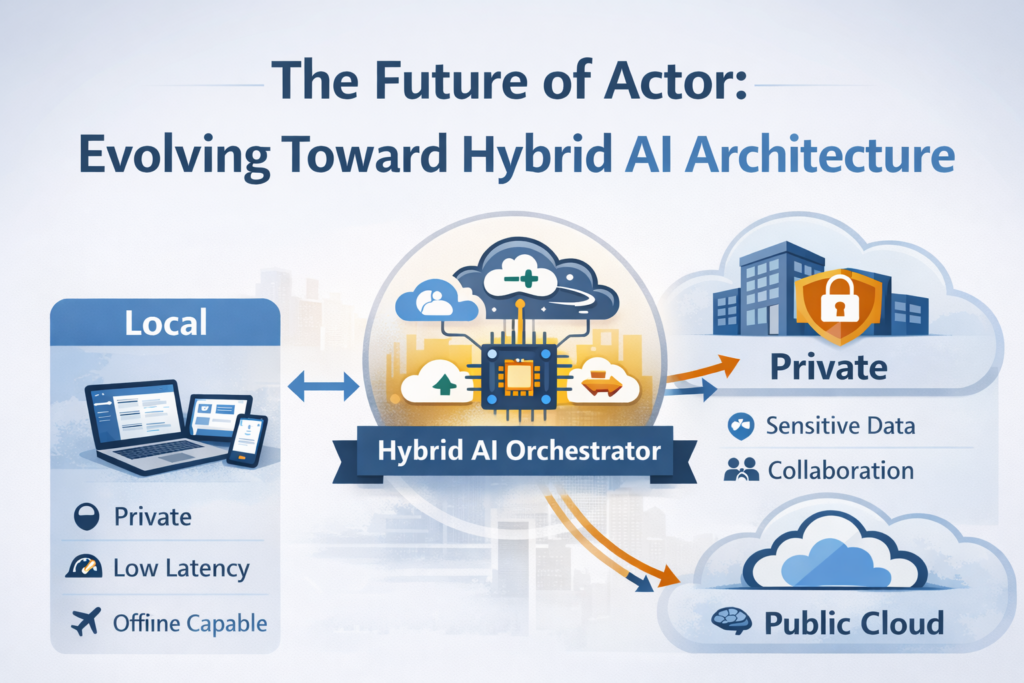

What starts to emerge instead is a hybrid architecture, where intelligence and execution are distributed across local devices, private infrastructure, and cloud models.

This is less about technology preference and more about architectural necessity.

The Limits of a Pure Cloud Assistant

Most current AI assistants operate primarily in the cloud. This makes sense initially: models are large, infrastructure is centralized, and updates are easy. Inference is also cheap, at least for some models.

But once an assistant becomes deeply integrated with work systems, a few structural issues appear.

First, data boundaries matter.

Work assistants interact with highly sensitive information: financial documents, internal emails, contracts, product roadmaps, HR discussions. Many organizations cannot legally or operationally stream this data continuously to external infrastructure.

Second, connectivity becomes a dependency.

A work assistant that stops functioning on a plane, in a train tunnel, or during network issues becomes unreliable for day-to-day operations.

Cloud AI is powerful, but it cannot realistically handle every layer of the system.

The Limits of a Pure Local Assistant

At the opposite end, running everything locally also runs into hard constraints.

First, the most capable reasoning models still require significant compute infrastructure. While local hardware (Apple Silicon, NPUs, and dedicated AI chips) is improving quickly, running frontier models for complex reasoning or multi-document synthesis remains impractical on most devices.

Second, latency compounds in agent workflows.

An assistant that performs multiple steps, such as reading an email, extracting tasks, checking the calendar, drafting a reply, and scheduling follow-ups, creates a chain of model calls and API interactions. Even small delays add up when actions are sequential.
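To make the compounding effect concrete, here is a small back-of-the-envelope sketch. The step names follow the example above, but every latency number is a made-up assumption for illustration, not a measurement.

```python
# Hypothetical per-step latencies in milliseconds (illustrative numbers only).
STEPS_MS = {
    "read_email": 400,
    "extract_tasks": 600,
    "check_calendar": 300,
    "draft_reply": 900,
    "schedule_follow_ups": 300,
}


def sequential_latency_ms(steps: dict[str, int], overhead_ms: int = 0) -> int:
    """Total wall-clock time when every step waits for the previous one.

    overhead_ms is an assumed fixed cost added to each step, for example a
    slower model or an extra network hop.
    """
    return sum(latency + overhead_ms for latency in steps.values())


baseline = sequential_latency_ms(STEPS_MS)
with_overhead = sequential_latency_ms(STEPS_MS, overhead_ms=200)
print(baseline, with_overhead)
```

With these assumed numbers, an extra 200 ms per step turns a 2.5 second chain into a 3.5 second one, and the penalty grows with every step added to the workflow.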

There is also a governance challenge.

Managing models across thousands of employee devices introduces operational complexity: updates, security patches, configuration management, and compliance monitoring.

Finally, many assistant workflows require shared state.

A project timeline, team knowledge base, or collaborative task system cannot live entirely on one person’s machine.

Local intelligence alone cannot provide the full picture either.

A Likely Direction: Distributed Intelligence

A more natural architecture for assistants like Actor is a distributed intelligence model, where different layers of the system run in different environments.

A simplified breakdown might look like this:

Local layer (device runtime)

Responsible for awareness of the user’s environment and fast actions.

Typical responsibilities:

- accessing local files, apps, and notifications

- detecting contextual signals (active documents, meetings, emails)

- executing fast, private actions

- caching frequently used knowledge

Private infrastructure (organization or user cloud)

Responsible for shared state and sensitive data processing.

Typical responsibilities:

- company knowledge bases

- shared agent memory

- team collaboration context

- sensitive document processing

Public cloud models

Responsible for heavy reasoning and large-scale knowledge.

Typical responsibilities:

- deep reasoning tasks

- complex synthesis across many documents

- large knowledge retrieval

- advanced language capabilities

In this model, the local runtime acts as the orchestrator, deciding where each task should execute.
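A minimal sketch of what such an orchestrator decision could look like is shown below. The `Task` fields, the `Layer` names, and the routing rules are all hypothetical and only illustrate the idea; they are not Actor's actual logic.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    LOCAL = "local"      # device runtime
    PRIVATE = "private"  # organization or user cloud
    CLOUD = "cloud"      # public cloud models


@dataclass(frozen=True)
class Task:
    name: str
    sensitive: bool = False           # touches data that must stay inside the organization
    needs_shared_state: bool = False  # needs team memory or a shared knowledge base
    heavy_reasoning: bool = False     # assumed to need a frontier-scale model


def route(task: Task, online: bool = True) -> Layer:
    """Pick an execution layer for a task (assumed rules, for illustration)."""
    if not online:
        return Layer.LOCAL  # degrade to local execution when there is no connectivity
    if task.sensitive or task.needs_shared_state:
        return Layer.PRIVATE  # sensitivity wins over reasoning depth
    if task.heavy_reasoning:
        return Layer.CLOUD
    return Layer.LOCAL


print(route(Task("summarize_contract", sensitive=True, heavy_reasoning=True)))
```

In this sketch the rule order is the design choice that matters: sensitivity is checked before reasoning depth, so a sensitive task never reaches a public model even when it would benefit from one.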

The Importance of the Trust Boundary

One of the most important design decisions becomes defining the trust boundary.

In practical terms this means answering questions such as:

- What data never leaves the device?

- What data can move to private infrastructure?

- What tasks can safely use external models?

- How are these decisions logged and audited?

This boundary becomes critical for enterprise adoption.

Organizations do not just want AI capabilities; they want predictability and control over where data flows.
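The questions above could be expressed as an explicit policy with an audit trail. The sketch below is only one possible shape: the data classes, the three destination levels, and the `TrustBoundary` class are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Assumed ordering: a higher number means the data travels further from the device.
DEVICE_ONLY, PRIVATE_INFRA, EXTERNAL_MODEL = 0, 1, 2

# Hypothetical policy: the furthest destination each data class may reach.
POLICY = {
    "hr_discussion": DEVICE_ONLY,
    "contract": PRIVATE_INFRA,
    "public_web_page": EXTERNAL_MODEL,
}


@dataclass
class TrustBoundary:
    policy: dict[str, int]
    audit_log: list[dict] = field(default_factory=list)

    def allow(self, data_class: str, destination: int) -> bool:
        """Check a data movement against the policy and record the decision."""
        # Unknown data classes fall back to the strictest setting.
        limit = self.policy.get(data_class, DEVICE_ONLY)
        allowed = destination <= limit
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "data_class": data_class,
            "destination": destination,
            "allowed": allowed,
        })
        return allowed


boundary = TrustBoundary(POLICY)
print(boundary.allow("contract", EXTERNAL_MODEL))  # denied under this assumed policy
```

Every decision, allowed or denied, lands in `audit_log`, which is the kind of record that lets an organization verify afterwards where its data was permitted to flow.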

Why This Architecture Is Likely to Become Standard

Several industry trends point toward this direction.

Hardware acceleration is improving rapidly on local devices, enabling lightweight models and fast local inference.

At the same time, frontier model capabilities continue to scale in cloud environments, maintaining a gap that makes cloud reasoning attractive for complex tasks.

Enterprises increasingly require data governance guarantees, pushing AI systems toward architectures that can respect internal boundaries.

Finally, emerging agent frameworks are already built around multi-model routing, where different models and execution environments handle different tasks.

Hybrid architectures naturally align with this pattern.

What This Means for Actor

For Actor specifically, a hybrid architecture could unlock several capabilities:

A local runtime could continuously understand the user’s environment (email context, meetings, documents) without needing to transmit everything externally.

A private Actor infrastructure layer could maintain user memory, task graphs, and collaborative context.

Cloud models could then be invoked selectively for tasks that genuinely require deeper reasoning.

Instead of a single AI endpoint, Actor becomes a system that coordinates intelligence across layers.

This architecture also allows users and organizations to choose different deployment strategies depending on their needs:

- personal cloud

- company infrastructure

- hybrid local + cloud

- fully private deployments

In other words, Actor evolves from being just an assistant to becoming a distributed cognitive layer for work.

Looking Ahead

The future of work assistants will likely not be defined by a single model or provider.

Instead, it will be shaped by how intelligence is orchestrated across environments.

Systems that can combine privacy, responsiveness, and powerful reasoning will have a structural advantage.

Hybrid architectures appear to be one of the most promising paths to achieve that balance.

PS: I’m rebuilding the whole architecture to be ready for a hybrid model in the near future. It’s a lot of work and it will take some time, but it’s my passion.